Why the “Next Stack” demands a total reimagination of the enterprise

Modern GPU-accelerated compute has birthed a new generation of infrastructure that goes well beyond the data center and beyond the LLM itself. Is The Next Stack emerging — an operating system for AI workloads that spans models, data intelligence layers, middleware, frameworks, tools, and runtimes, and could enable compound AI systems and intelligent applications?

Key speakers

- Ganesh Bell: Managing Director, Insight Partners (Moderator)

- Alexis Bjorlin: VP/GM, DGX Cloud, NVIDIA

- Ian Webster: CEO, Promptfoo

- Jody Clayton: GVP Advanced Technologies, Oracle

- Naveen Rao: Advisor, Databricks

Key takeaways

- GPU compute is not an incremental “faster cloud” — it is a fundamental departure from deterministic computing, requiring a complete reimagination from silicon to application.

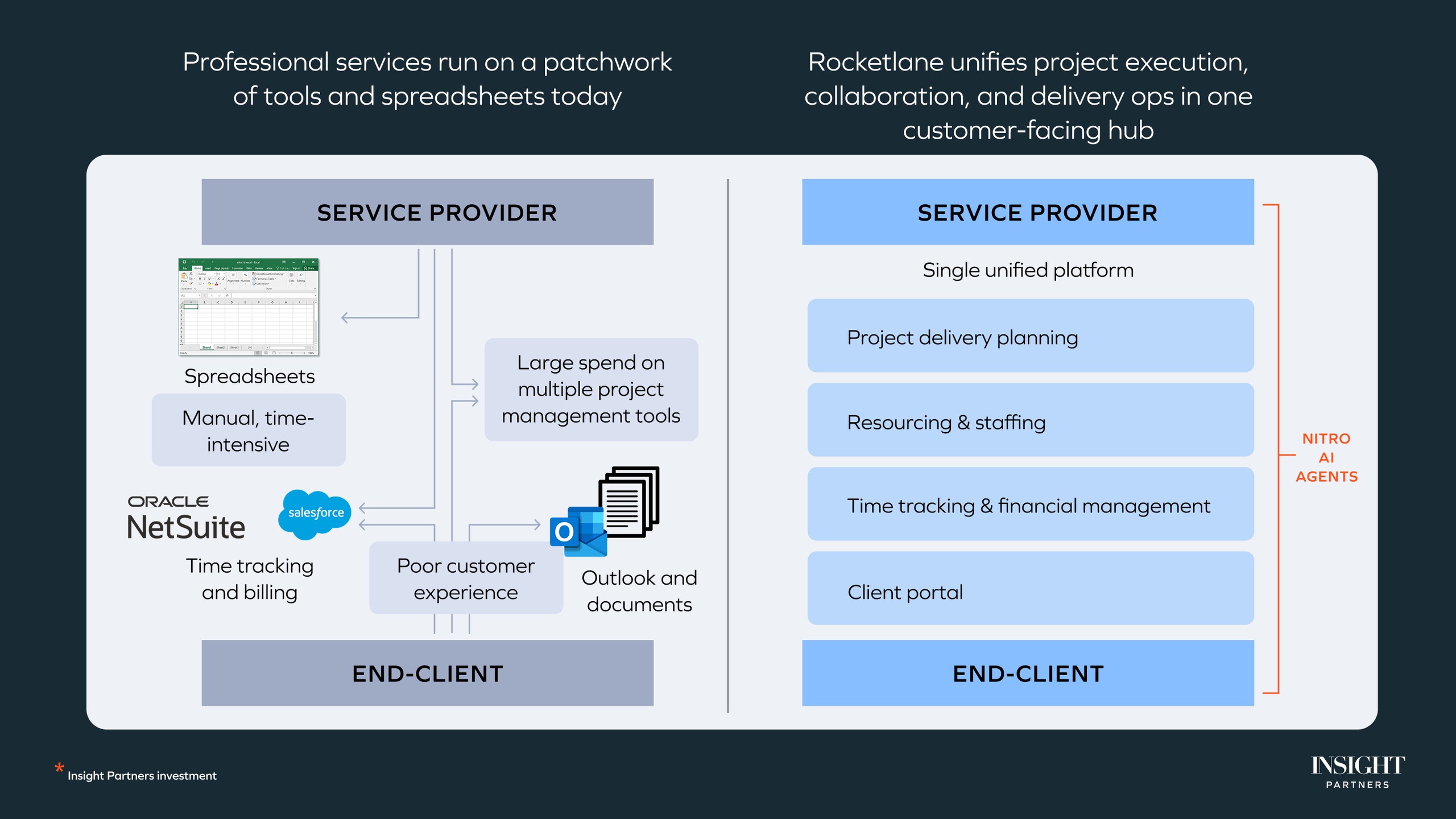

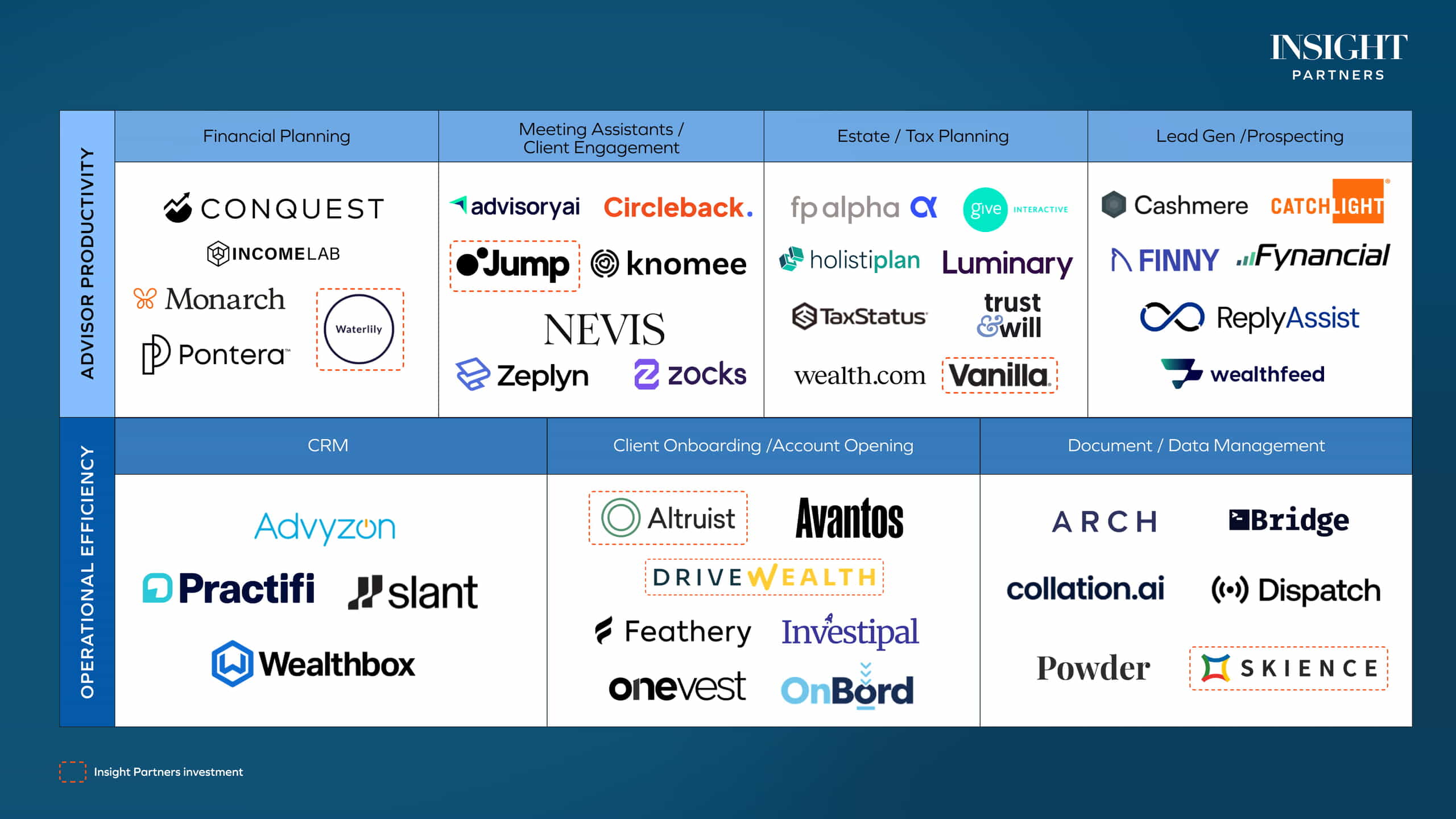

- The verticalized, siloed SaaS model of the last twenty years presents a challenge for AI Agents; the Next Stack requires a unified data foundation to scale.

- We are attempting to run the “logic of the future” on the “silicon of the past.” Closing the gap between biological efficiency (Rao’s squirrel, whose brain runs on less than a tenth of a watt) and today’s gigawatt-scale computing is the primary engineering challenge of the decade.

- AI ROI is currently stalled by human factors, not technical ones. Moving from pilots to production requires incentivizing curiosity and solving “unsexy” hurdles like secure multi-tenancy.

These insights came from our ScaleUp:AI event in October 2025, an industry-leading global conference that features topics across technologies and industries.

More than just a faster cloud

For too long, the enterprise has treated the AI revolution like a software patch, an “add-on” layered over legacy systems. This is a profound misconception. We aren’t just building a better chat interface; we are witnessing the birth of a fundamentally new architecture.

The prevailing mental model, that GPU compute is simply “faster cloud,” fails to capture the magnitude of the shift. We are moving away from the deterministic, predictable computing that defined the spreadsheet era and toward a stochastic “AI operating system.” That system spans models, data middleware, and runtimes, which together enable compound AI systems.

From goods to tokens

Bjorlin frames the current shift as a transformation of the global supply chain. We are transitioning from the traditional manufacturing of physical goods to the “manufacturing of intelligence.” In this new “sovereign economy,” tokens have become the new steel or oil, a primary output that nations and corporations must produce to remain competitive.

“We’re moving traditional manufacturing of goods to the manufacturing of intelligence to the generation of tokens, it’s actually vital to sovereign economies.”

— Alexis Bjorlin, VP/GM, DGX Cloud, NVIDIA

This requires a full-stack approach that begins long before a chip is installed, encompassing power, land, and specialized data center design. The economic calculus has shifted from cost-saving infrastructure to revenue-generation infrastructure.

“[NVIDIA CEO Jensen Huang] will always say, you know, the more you spend, the more you make. So for a $3 million investment in compute over the following three years, you should be able to make $30 million in revenue on that,” says Bjorlin.

To support this, hardware is becoming increasingly specialized. NVIDIA’s recent announcement of CPX, a dedicated inference chip designed to disaggregate the compute-intensive pre-fill phase from the memory-bandwidth-bound decode phase, highlights the move toward optimizing performance per total cost of ownership (TCO). When intelligence is a utility, the goal is global orchestration that optimizes every token produced.
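To make “performance per TCO” concrete, here is a minimal Python sketch that treats it as tokens served per dollar of total cost of ownership. The `Deployment` fields, the cost model, and the function names are illustrative assumptions, not NVIDIA’s or the panel’s definitions. The only figures taken from the session are the $3 million and $30 million in Bjorlin’s quote.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical inputs for one inference deployment (all values are assumptions)."""
    hardware_cost_usd: float        # one-time purchase price
    power_kw: float                 # average power draw while running
    usd_per_kwh: float              # electricity price
    other_opex_usd_per_year: float  # staff, space, networking, and so on
    tokens_per_second: float        # sustained throughput when busy
    utilization: float              # fraction of time spent serving, 0..1


def total_cost_of_ownership(d: Deployment, years: float) -> float:
    """Simplified TCO: hardware + energy + other operating costs over the period."""
    hours = years * 365 * 24
    energy_cost = d.power_kw * hours * d.usd_per_kwh
    return d.hardware_cost_usd + energy_cost + d.other_opex_usd_per_year * years


def tokens_per_tco_dollar(d: Deployment, years: float) -> float:
    """Performance per TCO, expressed as tokens produced per dollar spent."""
    seconds = years * 365 * 24 * 3600
    tokens = d.tokens_per_second * d.utilization * seconds
    return tokens / total_cost_of_ownership(d, years)


def revenue_multiple(investment_usd: float, revenue_usd: float) -> float:
    """Revenue earned per dollar invested."""
    return revenue_usd / investment_usd


# The figures from the quote above imply a 10x multiple over three years.
print(revenue_multiple(3_000_000, 30_000_000))
```

Under this simplified model, raising utilization increases tokens per TCO dollar without changing any cost term, which is one reason utilization comes up again in the multi-tenancy discussion.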

Breaking the data silos

The current enterprise landscape is a graveyard of verticalized silos. Rao identifies this as a combinatorial problem: if a company uses 50 different SaaS tools, each with its own isolated data foundation, the number of integrations needed to assemble a unified AI context grows so quickly that it becomes practically intractable.

Rao advocates a radical “desaassification” of the world. In the Next Stack, the application no longer owns the data. Instead, organizations must build a common data foundation where vertical applications sit as thin layers on top of a unified, governed pool. In his view, this is the only way to enable autonomous Agents.

Without breaking these silos, Agents lack the cross-functional context required to actually perform work.
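One way to read the combinatorial argument is to count connections. The Python sketch below assumes the worst case, in which every tool must exchange data directly with every other tool, and compares that with a shared foundation where each tool connects once. The pairwise-integration model and the function names are assumptions for illustration; the panel did not specify how the problem should be counted.

```python
from math import comb


def point_to_point_links(n_tools: int) -> int:
    """Integrations needed if every pair of tools is wired together directly."""
    return comb(n_tools, 2)  # n * (n - 1) / 2


def shared_foundation_links(n_tools: int) -> int:
    """Integrations needed if each tool connects once to a common data foundation."""
    return n_tools


for n in (5, 10, 50):
    print(n, point_to_point_links(n), shared_foundation_links(n))
```

For 50 tools, the pairwise model needs 1,225 integrations, against 50 for the shared foundation. The gap widens quadratically as tools are added.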

Why AI hits a wall in production

While the industry is flooded with successful AI pilots, few make it to production. Webster identifies the “security tripwire” as the moment a model interacts with the application layer. The friction isn’t the model itself, but the risk of connecting that model to Personally Identifiable Information (PII) or executive functions.

For executives, security is no longer a perimeter problem; it is a supply chain and runtime problem. The Next Stack requires a heterogeneous security approach: a plan that spans from model provenance through the continuous integration and continuous deployment (CI/CD) pipeline and into the live execution environment.

“[I]f you don’t have a plan for AI security that is full stack, you know from runtime all the way through to CI/CD pre-deployment and your model and its supply chain, then you’re going to have a hard time building any sort of sustainable applications on top of AI,” says Webster.

Without this full-stack security framework, the intelligence being manufactured remains too risky to deploy at scale.

Jevons’ Paradox

Rao pointed to energy as the ultimate bound on AI, citing Jevons’ Paradox: the economic observation that as a resource is used more efficiently and becomes cheaper, total consumption of it increases because it becomes practical for more uses. By that logic, as the cost per token drops, demand for AI will explode, placing unprecedented pressure on global power infrastructure.
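The mechanism can be illustrated with a constant-elasticity demand curve, a standard textbook simplification and not a model presented by the panel. The sketch assumes that the price per token falls in proportion to the energy per token. The elasticity values are hypothetical.

```python
def demand_multiplier(price_ratio: float, elasticity: float) -> float:
    """Change in tokens demanded when price changes by price_ratio.

    Assumes constant-elasticity demand: quantity is proportional to price ** (-elasticity).
    """
    return price_ratio ** (-elasticity)


def total_energy_multiplier(efficiency_gain: float, elasticity: float) -> float:
    """Change in total energy use after an efficiency gain.

    efficiency_gain is the factor by which energy per token falls
    (2.0 means half the energy per token). Price per token is assumed
    to fall by the same factor.
    """
    price_ratio = 1.0 / efficiency_gain
    tokens = demand_multiplier(price_ratio, elasticity)
    return tokens / efficiency_gain


# Hypothetical elasticities: below, at, and above 1.
for elasticity in (0.5, 1.0, 1.5):
    print(elasticity, total_energy_multiplier(2.0, elasticity))
```

In this model, total energy use rises after an efficiency gain only when the elasticity is above 1, meaning demand grows faster than the price falls. That is the regime Jevons’ Paradox describes.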

“There’s not a single computer we have that runs on a gigawatt that can do that performance right now.”

— Naveen Rao, Advisor, Databricks

At the core of the problem, we are running stochastic logic (probabilistic AI) on deterministic silicon (precise, bit-for-bit hardware). To reach the next order of magnitude in scaling, we must rethink the foundations of computing so that computation runs on the physics of the hardware itself.

Speaking to AI and energy efficiency, Rao offered a biological benchmark to illustrate his point:

“Go watch a squirrel for a moment…they can jump 10 feet branch to branch, land on something five millimeters wide, and do it a thousand times out of a thousand perfectly…they do that on a tenth of a watt. Their brain runs in less than a tenth of a watt. There’s not a single computer we have that runs on a gigawatt that can do that performance right now. So we are very, very far away from what biology is.”

The “unsexy” hurdles

When asked what “unsexy” problem would 10x AI adoption, the panel looked beyond the models to infrastructure and human behavior.

Secure multi-tenancy

Bjorlin argued that solving multi-tenancy is critical to enterprise ROI. Organizations must drive maximum efficiency and utilization in AI clouds while maintaining strict isolation. Without this, the unit economics of the “Intelligence Factory” don’t work.

The curiosity bottleneck

Clayton contended that the ultimate bottleneck is human, not technical. ROI is stalled because the workforce lacks incentives to change.

“Humans need to be curious…We have to give them the tools, we have to give them access to trustworthy data, and encourage them and incentivize them to use it. Because if humans don’t use it, we can build the coolest toys, and you’re not going to get the value you’re expecting,” she says.

The only limit is imagination

The building blocks of the Next Stack, from NVIDIA’s specialized inference chips to unified data foundations, are arriving. But the architecture is far from settled. We are in a transitional moment in which the programming model for the next decade is still being written.

The most provocative takeaway from the panel is that technology is no longer the primary constraint. The real programming challenge is the reimagination of business processes themselves.

As the stack settles, the only remaining limit may be our collective ability to imagine what is possible when intelligence is no longer scarce, but manufactured at scale.

Watch more sessions from ScaleUp:AI, and see scaleup.events for updates on ScaleUp:AI 2026.

*Note: Insight Partners has invested in Promptfoo.